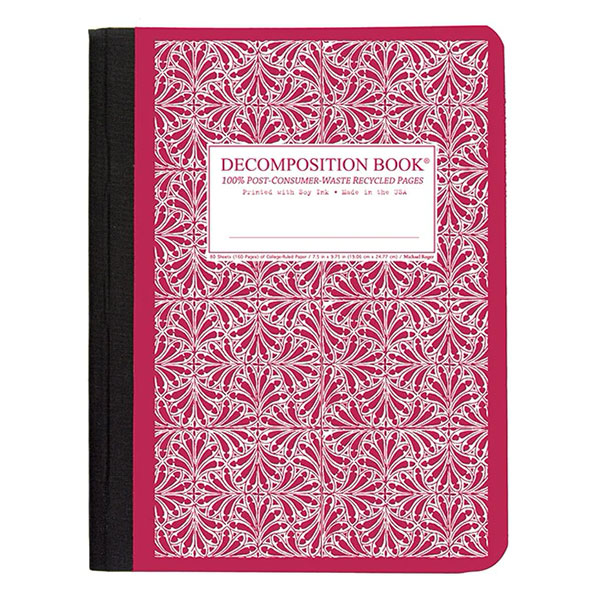

If there is one thing I am certain of, it is that notebooks are a lifeline. I am a lifetime buyer and user of notebooks of every style and flavor, and this journey has been one imbued with learning: learning about paper weights and constructions, and how my favorite pen (a Pilot G-2 0.7 mm, not up for debate unless you are left-handed) feels on a fresh piece of paper. Over a lifetime of notebooks, I have learned about the world outside and I have learned, above all, about the world inside myself.

I have learned that the actual act of writing (thinking thoughts and putting them down) is pretty excruciating, no matter how long you've been at it. But a good notebook that fits the situation dulls the pain. To really capitalize on this, I'm a big proponent of notebooks dedicated to certain forms of writing. There's the work notebook for planning, the night journal for anxious thoughts, the tiny backpack notebook for traveling, and the holy grail notebook for only the most thought-provoking notes and quotes.

Here, I've rounded up the 15 best notebooks for different occasions and preferences. They range, as they should, from useful and cheap to fancy and luxurious, and they're great for amping up your desk at home or for gifting to the paper nerd in your own life. Please, go ahead and reap the benefits of my life's work.

One example is the Decomposition notebook, such as the Monarch Migration Decomposition Book: it measures 7.5 × 9.25 in and has 100 lightly lined pages of high-quality white paper and a soft matte cover. All Decomposition notebooks are eco-friendly, made in the USA, and printed with soy ink.

Low-Rank Adaptation of Large Language Models (LoRA) is a training method that accelerates the training of large models while consuming less memory. It adds pairs of rank-decomposition weight matrices (called update matrices) to existing weights, and only trains those newly added weights. This has a couple of advantages. Previous pretrained weights are kept frozen, so the model is not as prone to catastrophic forgetting. Rank-decomposition matrices have significantly fewer parameters than the original model, which means that trained LoRA weights are easily portable.

LoRA matrices are generally added to the attention layers of the original model. Diffusers provides the `load_attn_procs()` method to load the LoRA weights into a model's attention layers, and you can control the extent to which the model is adapted toward new training images via a `scale` parameter. The greater memory efficiency allows you to run fine-tuning on consumer GPUs like the Tesla T4, RTX 3080, or even the RTX 2080 Ti. GPUs like the T4 are free and readily accessible in Kaggle or Google Colab notebooks.

We support fine-tuning with Stable Diffusion XL. For example, the following loads the SDXL base model, applies the LoRA weights stored in `Kamepan.safetensors`, and generates an image:

```python
import torch
from diffusers import DiffusionPipeline

# Load the SDXL base model in half precision and move it to the GPU.
base_model_id = "stabilityai/stable-diffusion-xl-base-0.9"
pipeline = DiffusionPipeline.from_pretrained(base_model_id, torch_dtype=torch.float16).to("cuda")

# Load the LoRA weights from Kamepan.safetensors in the current directory.
pipeline.load_lora_weights(".", weight_name="Kamepan.safetensors")

prompt = "anime screencap, glint, drawing, best quality, light smile, shy, a full body of a girl wearing wedding dress in the middle of the forest beneath the trees, fireflies, big eyes, 2d, cute, anime girl, waifu, cel shading, magical girl, vivid colors, (outline:1.1), manga anime artstyle, masterpiece, offical wallpaper, glint"
negative_prompt = "(deformed, bad quality, sketch, depth of field, blurry:1.1), grainy, bad anatomy, bad perspective, old, ugly, realistic, cartoon, disney, bad propotions"

# Illustrative sampling settings (assumed values; the original snippet used
# these names without defining them).
num_inference_steps = 30
guidance_scale = 7.0
generator = torch.Generator("cuda").manual_seed(0)

image = pipeline(
    prompt=prompt,
    negative_prompt=negative_prompt,
    num_inference_steps=num_inference_steps,
    generator=generator,
    guidance_scale=guidance_scale,
).images[0]
```

If you look carefully, the inference UX is identical to what we presented in the sections above. Thanks to for helping us integrate this feature.

Known limitations specific to the Kohya-styled LoRAs: when images don't look similar to those from other UIs, such as ComfyUI, it can be because of multiple reasons, as explained here. We also don't fully support LyCORIS checkpoints. To the best of our knowledge, our current `load_lora_weights()` should support LyCORIS checkpoints that have LoRA and LoCon modules, but not the other ones, such as Hada, LoKR, etc.
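Because only LyCORIS checkpoints built from LoRA and LoCon modules are expected to load, it can help to inspect a checkpoint's key names before calling `load_lora_weights()`. The sketch below is a hypothetical helper, not part of diffusers: it assumes Kohya/LyCORIS-style key names of the form `<module>.<param>`, where LoHa ("Hada") parameters start with `hada_` and LoKr parameters start with `lokr_`. Those naming patterns are an assumption, so treat the result as a hint rather than a guarantee.

```python
# Hypothetical pre-flight check for LyCORIS checkpoints.
# Assumption: keys look like "<module_name>.<param_name>", and LoHa/LoKr
# parameters use the "hada_" / "lokr_" prefixes. LoRA and LoCon modules
# (lora_down / lora_up / alpha) are treated as loadable.
UNSUPPORTED_PREFIXES = ("hada_", "lokr_")


def find_unsupported_modules(keys):
    """Return the sorted module names whose parameters look like LoHa or LoKr."""
    unsupported = set()
    for key in keys:
        # Split at the first dot: module name on the left, parameter on the right.
        module, _, param = key.partition(".")
        if param.startswith(UNSUPPORTED_PREFIXES):
            unsupported.add(module)
    return sorted(unsupported)


# Illustrative key names (made up for this example).
keys = [
    "lora_unet_mid_block_attentions_0_proj_in.lora_down.weight",
    "lora_unet_mid_block_attentions_0_proj_in.lora_up.weight",
    "lora_unet_mid_block_attentions_0_proj_in.alpha",
    "lora_unet_down_blocks_1_resnets_0_conv1.hada_w1_a",
    "lora_unet_down_blocks_1_resnets_0_conv1.hada_w1_b",
    "lora_unet_up_blocks_0_resnets_0_conv2.lokr_w1",
]

print(find_unsupported_modules(keys))
```

With a real file, the key names could be read with `safetensors.safe_open(path, framework="pt")` and its `keys()` method, without loading the tensors themselves.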
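The LoRA idea described in this post (a frozen pretrained weight plus a pair of small trainable update matrices, blended in through a scale factor) can be sketched for a single linear layer y = Wx. This is a minimal pure-Python illustration, assuming the update takes the form B(Ax) with A of shape (r, d_in) and B of shape (d_out, r); the names `matvec` and `lora_forward` are hypothetical, and this is not how diffusers implements its attention processors.

```python
def matvec(m, v):
    """Multiply matrix m (a list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W, A, B, x, scale=1.0):
    """Return W x + scale * B (A x).

    W: frozen pretrained weight, shape (d_out, d_in); never updated.
    A: trainable down-projection, shape (r, d_in).
    B: trainable up-projection, shape (d_out, r).
    scale: how strongly the LoRA update is applied (0.0 = base model only).
    """
    base = matvec(W, x)                # output of the frozen weight
    update = matvec(B, matvec(A, x))   # project down to r dims, then back up
    return [b + scale * u for b, u in zip(base, update)]


# Toy layer: d_in = d_out = 3, rank r = 1.
W = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0],
     [0.0, 0.0, 1.0]]
A = [[1.0, 1.0, 1.0]]         # shape (1, 3)
B = [[0.5], [0.0], [-0.5]]    # shape (3, 1)
x = [1.0, 2.0, 3.0]

print(lora_forward(W, A, B, x, scale=0.0))  # → [1.0, 2.0, 3.0]
print(lora_forward(W, A, B, x, scale=1.0))  # → [4.0, 2.0, 0.0]
```

Setting `scale=0.0` reproduces the base model exactly, and intermediate values interpolate toward the fully adapted output, which is the intuition behind the `scale` parameter. In the original LoRA paper, B is initialized to zero, so training starts from the unmodified pretrained model.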
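The claim that rank-decomposition matrices have far fewer parameters than the weights they adapt is easy to check with a little arithmetic. A full d_out × d_in matrix has d_out · d_in entries, while a rank-r update stores only A (r × d_in) and B (d_out × r). The 1280 × 1280 size and rank 4 below are illustrative assumptions, not values taken from any specific checkpoint.

```python
def full_params(d_out, d_in):
    """Parameters in a dense d_out x d_in weight matrix."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Parameters in a rank-r LoRA update: A is (rank x d_in), B is (d_out x rank)."""
    return rank * d_in + d_out * rank


# Illustrative attention projection size and LoRA rank (assumed).
d_out, d_in, rank = 1280, 1280, 4

full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(full, lora, full / lora)  # → 1638400 10240 160.0
```

For this assumed layer, the trainable update is 160 times smaller than the frozen weight, which is why trained LoRA files are small enough to share easily.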
